Enterprise AI training spending has reached unprecedented levels. Internal academies, certification programs, vendor partnerships, executive briefings on prompt engineering. Organizations are investing more in AI enablement than at any point in the technology's commercial history.

The results tell a different story.

Between 70% and 85% of AI initiatives fail to meet expected outcomes [1]. In 2025, 42% of companies abandoned most of their AI initiatives, up from 17% in 2024 [2]. Meanwhile, only one-third of companies prioritize change management and training as core components of their AI rollouts [3]. Formal learning hours per employee have declined to 13.7 hours in 2024, down from 35 hours in 2020 [4].
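To put two of these figures on a common footing, the short calculation below derives the changes they imply. It is only an arithmetic check on the numbers quoted above; the function and variable names are illustrative and do not come from any of the cited studies.

```python
def relative_change(old: float, new: float) -> float:
    """Percentage change from old to new, relative to old."""
    return (new - old) / old * 100


# Figures quoted in the paragraph above.
abandon_2024, abandon_2025 = 17.0, 42.0  # % of companies abandoning most AI initiatives
hours_2020, hours_2024 = 35.0, 13.7      # formal learning hours per employee

# Difference between two percentages is expressed in percentage points.
abandon_point_change = abandon_2025 - abandon_2024
hours_change_pct = relative_change(hours_2020, hours_2024)

print(f"Abandonment rose by {abandon_point_change:.0f} percentage points")
print(f"Learning hours changed by {hours_change_pct:.1f}%")
```

On these figures, abandonment rose by 25 percentage points while formal learning hours per employee fell by roughly 61%.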

The correlation runs counter to expectation. Investment increases while outcomes deteriorate.

This pattern does not reflect insufficient intelligence among knowledge workers, inadequate tooling from vendors, or lack of executive commitment to transformation. The issue lies in the adoption model itself. Organizations are approaching AI fluency as if it were a knowledge transfer problem when the evidence suggests it functions more like language acquisition. Classroom instruction may provide vocabulary and grammar. But real fluency requires immersion.

The Structural Barriers to Training-Based Adoption

Three structural characteristics of enterprise organizations systematically undermine training-based AI adoption, regardless of program quality or design sophistication.

Time Pressure Eliminates Space for Experimentation

Knowledge workers operate under competing pressures that make sustained experimentation with new workflows functionally impossible. Quarterly performance metrics, campaign delivery schedules, board reporting cycles, and customer escalations create environments where reverting to proven processes becomes the rational choice under deadline pressure.

Research indicates the average employee experienced ten planned enterprise changes in 2022, up from just two in 2016 [5]. In environments saturated with change initiatives, individuals default to established practices when performance pressure intensifies. AI workflows learned in training sessions get deprioritized the moment a real deadline materializes. Organizations cannot reasonably expect employees to adopt new methodologies while simultaneously measuring them against performance benchmarks optimized for old processes.

Generic Training Does Not Transfer to Context-Specific Workflows

Most enterprise AI training focuses on general principles. How to construct effective prompts. Common use cases across marketing functions. Theoretical frameworks for AI integration. These programs deliver conceptual understanding but struggle to bridge the gap between abstract knowledge and specific application.

When a content manager sits down to draft a campaign with 90 minutes before their next obligation, they are not thinking about theoretical use cases. They need a workflow that solves the immediate problem at hand. Research on blended learning demonstrates that 15-minute modules with integrated hands-on practice consistently outperform 30-45 minute lectures in both engagement and retention [6]. However, even optimal training design cannot fully bridge the distance between conceptual understanding and context-specific execution. Training provides knowledge about what AI can do. It does not automatically translate that knowledge into muscle memory for how to do daily work differently.

The Knowing-Doing Gap in Organizational Behavior

AI proficiency is not fundamentally about knowledge acquisition. It is about sustained behavior change in how work gets executed. This distinction maps directly to what organizational researchers identify as the knowing-doing gap, the persistent phenomenon where individuals possess clear knowledge of what they should do but fail to act consistently with that knowledge.

Quantitative research reveals a strong negative correlation between resistance to change and acceptance of new practices, with a coefficient of -0.646. This relationship is mediated by trust in management and perceived organizational support, suggesting that adoption depends less on information transfer and more on demonstrated proof that new approaches actually work in real conditions. Organizations that conduct pre-implementation resistance assessments achieve 40% higher acceptance rates than those that skip this step [7].

The implication is clear. People need to observe new workflows succeeding in contexts that matter to them, demonstrated by colleagues they trust, before widespread adoption becomes viable. Training programs that treat AI adoption as pure knowledge transfer systematically underestimate this behavioral dimension.

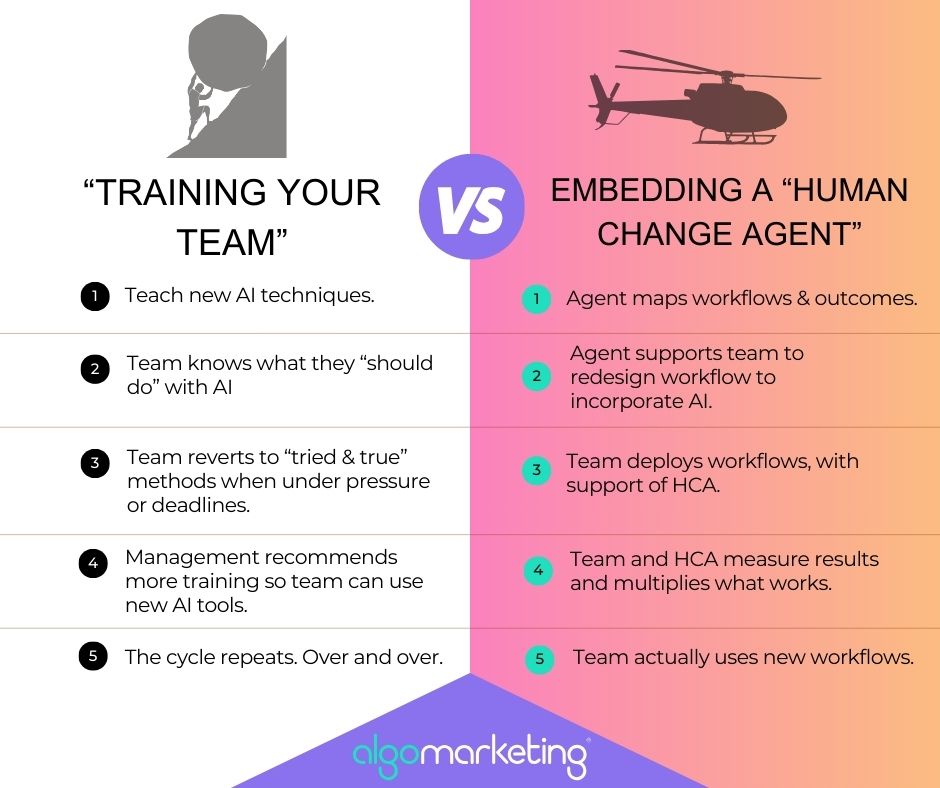

The Catalyst Model: Embedding Fluency Rather Than Teaching It

A different approach has emerged in organizations that successfully scale AI adoption. Rather than attempting to train entire teams to fluency, these organizations embed individuals who already operate at AI-fluent levels. These embedded specialists function as catalysts in the chemical sense. They lower the activation energy required for workflow transformation, accelerate adoption timelines that would otherwise span years, and, crucially, do not need to remain permanently integrated into the team structure to sustain the change they facilitate.

Organizations with formal change champion networks achieve 68% higher change acceptance rates and 40% faster time-to-adoption compared to organizations without such structures [8]. However, effective change champions are not individuals who completed certification programs and received formal designation. They are practitioners who already work in AI-fluent ways and can demonstrate tangible results to colleagues facing similar challenges.

Research on peer influence in organizational settings shows that workers with higher task similarity to their colleagues experience stronger peer effects when adopting new practices [9]. Adoption spreads through demonstrated success among peers, not through top-down mandate. This insight fundamentally reframes how organizations should approach AI enablement.

The Human Change Agent model operationalizes this insight. These are not external consultants who deliver recommendations and disengage. They are specialists who embed temporarily inside teams, work on actual business problems alongside existing employees, and transform workflows from within through direct collaboration.

The Three-Phase Deployment Pattern

Effective Human Change Agent engagements follow a consistent three-phase pattern, regardless of functional area or industry vertical.

Phase one involves mapping existing workflows with granular specificity. Human Change Agents observe how individual contributors currently execute their responsibilities. For example: What does the content development process actually look like from assignment to publication? How does campaign production flow from brief to deployment? Where do manual processes consume disproportionate time relative to value created? The focus is not on theoretical workflows documented in process manuals but on actual practices as they occur in daily operations.

The critical question in this phase is diagnostic: which elements of current workflows do practitioners wish they could eliminate or automate? This question identifies pain points with immediate operational relevance rather than abstract opportunities for improvement.

Phase two involves workflow redesign with AI integration as the default state rather than an optional enhancement. Human Change Agents do not provide generic advice about where to apply existing tools. They build production-ready solutions tailored to the specific context they have observed. Examples include a custom AI application that generates first-draft content using organizational voice and internal knowledge bases, an automated system that qualifies event attendees and executes personalized outreach at scale, and an analytics engine that identifies high-potential accounts below engagement thresholds and recommends specific conversion actions.

Only 26% of organizations possess the capabilities required to advance AI pilots from proof-of-concept to production deployment [10]. The constraint is operational rather than technical. Many teams can construct demonstrations. Few can build solutions that integrate seamlessly into daily workflows and sustain usage over time.

Phase three focuses on deployment, measurement, and knowledge transfer. Human Change Agents do not simply deliver solutions and disengage. They support initial adoption, facilitate iterative refinement based on actual usage patterns, measure impact against relevant performance metrics, and document both the solution architecture and the transformation process. This documentation becomes an organizational asset that enables replication and adaptation across other teams and functions.

This creates conditions for what researchers describe as viral adoption. When team members observe colleagues achieving measurably superior results through new workflows, demand for access to those workflows spreads organically. The role of the Human Change Agent shifts from building solutions to adapting proven approaches for different contexts.

Implementation Evidence: Engagement Optimization at Google

João Cardoso, working with Google's xWF team, encountered a challenge common across enterprise marketing organizations. Sales and marketing teams had identified accounts approaching the engagement threshold for "highly engaged" status, but lacked systematic methods for determining which interventions would most efficiently drive accounts across that threshold.

The conventional response would have involved increasing activity volume. More webinar invitations. Higher email cadence. Broader event participation. This approach optimizes for activity rather than outcome.

João constructed a prototype web application that operationalized a data-driven alternative. The application ingests engagement point gaps, contact composition data across organizational hierarchy levels, and historical activity patterns. It identifies accounts with high conversion probability and generates tailored recommendations specifying which stakeholders to prioritize and which engagement types would most likely generate the required threshold movement.
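The article does not describe how the prototype computes its recommendations, so the following is only a minimal sketch of the general idea: combine the engagement point gap, contact seniority, and recent activity into a priority score, then attach a suggested action. Every name, weight, and threshold here (`Account`, `MAX_GAP`, `WEIGHTS`, the 0.25 and 3 cut-offs, the sample accounts) is a hypothetical assumption for illustration, not a detail of the actual application.

```python
from dataclasses import dataclass


@dataclass
class Account:
    name: str
    points_gap: float       # engagement points still needed to reach the threshold
    senior_contacts: int    # known contacts at senior hierarchy levels
    total_contacts: int     # all known contacts at the account
    recent_activities: int  # engagement activities logged in a recent window


# Hypothetical tuning values; a real tool would calibrate these on historical data.
MAX_GAP = 50.0
ACTIVITY_CAP = 10
WEIGHTS = {"closeness": 0.5, "seniority": 0.3, "momentum": 0.2}


def senior_share(account: Account) -> float:
    """Fraction of known contacts that are senior (0.0 when no contacts are known)."""
    if account.total_contacts == 0:
        return 0.0
    return account.senior_contacts / account.total_contacts


def priority_score(account: Account) -> float:
    """Weighted score; higher means a smaller push is likely to cross the threshold."""
    if account.points_gap <= 0 or account.points_gap > MAX_GAP:
        return 0.0  # already over the threshold, or too far away to prioritize
    closeness = 1 - account.points_gap / MAX_GAP
    momentum = min(account.recent_activities, ACTIVITY_CAP) / ACTIVITY_CAP
    score = (WEIGHTS["closeness"] * closeness
             + WEIGHTS["seniority"] * senior_share(account)
             + WEIGHTS["momentum"] * momentum)
    return round(score, 3)


def recommend(account: Account) -> str:
    """Pick one illustrative next action based on the account's weakest signal."""
    if senior_share(account) < 0.25:
        return "prioritize outreach to senior stakeholders"
    if account.recent_activities < 3:
        return "re-engage existing contacts with a targeted event invitation"
    return "maintain current cadence and monitor the remaining gap"


def rank_accounts(accounts: list[Account], top_n: int = 3) -> list[tuple[str, float, str]]:
    """Return up to top_n accounts with a positive score, highest score first."""
    scored = [(a.name, priority_score(a), recommend(a)) for a in accounts]
    eligible = [row for row in scored if row[1] > 0]
    return sorted(eligible, key=lambda row: row[1], reverse=True)[:top_n]


if __name__ == "__main__":
    # Made-up sample accounts, purely for demonstration.
    sample = [
        Account("Acme", points_gap=10, senior_contacts=2, total_contacts=4, recent_activities=5),
        Account("Globex", points_gap=40, senior_contacts=0, total_contacts=5, recent_activities=1),
        Account("Initech", points_gap=0, senior_contacts=3, total_contacts=6, recent_activities=8),
    ]
    for name, score, action in rank_accounts(sample):
        print(f"{name}: score={score} -> {action}")
```

A transparent weighted score is used here because it is easy for a team to inspect and challenge; a production tool could just as well rely on a model fitted to historical conversions.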

This was not a consultant delivering strategic recommendations for what the organization should build. This was an operator embedded within the team, constructing the solution directly, and deploying it into production systems.

The operational impact manifested across multiple dimensions. Teams shifted from diffuse activity allocation to strategic account prioritization. Planning processes moved from reactive event scheduling to proactive, data-informed engagement design. Even modest improvements in conversion rates for previously overlooked accounts translate to significant incremental revenue at enterprise scale.

The adoption metric proved most instructive. While still in prototype form, the application changed how teams conceptualized engagement planning. Not through mandate or training, but through demonstrated utility in solving real problems more effectively than existing approaches.

Four Mechanisms That Enable Catalyst-Based Adoption

The catalyst model succeeds where training-based approaches stall because it operates through different mechanisms of behavior change.

Peer Influence Versus Hierarchical Mandate

Adoption does not spread primarily through executive directive or formal policy. Research on organizational change demonstrates that individuals adopt new practices when they observe colleagues in similar roles achieving superior outcomes. The mechanism is social proof rather than compliance. When team members see another team member producing better results through different workflows, the question shifts from "should we adopt this?" to "how quickly can we access this?"

Workflow Redesign Versus Tool Deployment

Most AI adoption initiatives follow a tool-first logic. Deploy the technology platform, provide access credentials, offer basic training, and expect utilization. The catalyst model inverts this sequence. It begins with workflow analysis, redesigns processes with AI as the foundational assumption rather than an optional add-on, and makes the underlying technology essentially invisible to end users. The team adopts a new way of working. The fact that AI powers that new workflow becomes incidental.

Embedded Integration Versus External Advisory

External consultants and training providers operate as temporary visitors to the organization. They deliver recommendations, conduct workshops, and disengage. Human Change Agents function as temporary colleagues. They participate in daily standups, communicate through internal channels, and collaborate on actual deliverables under real deadlines. The relationship is peer collaboration rather than expert instruction. This structural difference fundamentally changes how knowledge transfer occurs.

Reusable Infrastructure Versus Isolated Wins

Each workflow transformation generates documented assets that become organizational infrastructure: implementation specifications, configuration templates, integration code, usage documentation, and performance benchmarks. These artifacts exist in accessible repositories where other teams can discover, evaluate, and adapt them for different contexts. Single deployments become replicable patterns. Initial investments in transformation compound as the catalog of proven solutions expands.

Strategic Implications for Enterprise Marketing Leaders

The average organization abandons 46% of its AI proof-of-concepts before they reach production deployment [11]. This pattern does not primarily reflect technical limitations in AI capabilities or insufficient investment in enabling technologies. It reflects a fundamental mismatch between how organizations attempt to drive adoption and how adoption actually occurs in complex operational environments.

For marketing leaders evaluating AI strategy, the relevant question is not "how do we train our team on AI?" The question is "what infrastructure do we need to deploy AI-fluent capability when specific opportunities or challenges emerge?" This reframing shifts focus from knowledge transfer to capability access.

Organizations that solve the capability access problem will compound their competitive position across successive planning cycles. More workflows operating at AI-enabled efficiency. More team members fluent in AI-native approaches. More documented solutions ready for replication. The performance gap between these organizations and competitors still pursuing training-based adoption will expand with each iteration.

Success in AI adoption increasingly resembles language immersion rather than language study. Classroom instruction provides grammatical rules and vocabulary lists. Fluency emerges from sustained exposure to native speakers in contexts where communication serves real purposes. The same pattern governs organizational AI adoption. Training provides conceptual frameworks and theoretical understanding. Fluency develops through proximity to practitioners who already operate in AI-fluent ways, working on problems that matter, under actual constraints.

The executives who recognize this distinction and restructure their talent deployment models accordingly will establish advantages that training budgets alone cannot replicate. Not because they spend more on enablement, but because they have fundamentally different models for how capability enters and spreads through their organizations.

The Path Forward

AI fluency does not scale through classroom instruction. It scales through embedded catalysts who lower activation energy for change, through peer networks that propagate demonstrated success, and through infrastructure that makes proven solutions discoverable and adaptable.

Organizations still attempting to train their way to AI proficiency will find the gap between aspiration and execution widening rather than narrowing. The volume of training will increase. The sophistication of content will improve. But adoption rates will remain stubbornly low because the model misunderstands the nature of the problem.

The alternative exists and functions reliably. Embed specialists who already work in AI-fluent ways. Have them collaborate with existing teams on real business challenges. Transform workflows rather than deliver training. Document what works. Enable replication across the organization. Measure success by workflow transformations rather than training completion rates.

Algomarketing has operationalized this model through Human Change Agent deployments across enterprise marketing organizations. These specialists embed temporarily within teams, build production-ready AI solutions collaboratively, and transfer capability through direct work rather than abstract instruction. The typical engagement compresses what traditional training approaches would require months to achieve into focused transformation cycles measured in weeks.

For marketing leaders interested in piloting the catalyst model, the approach is straightforward. Identify high-impact workflows where AI integration would generate measurable performance improvements. Deploy an AI-fluent specialist to work alongside the team executing those workflows. Build, measure, document, and scale what works.

AI fluency is not taught through presentation decks and certification programs. It is transferred through proximity, collaboration, and demonstrated success on problems that matter. The organizations that internalize this insight will build compounding advantages. Those that continue pursuing training-based adoption will continue generating the same disappointing statistics that characterize the current landscape.

The choice is becoming increasingly clear. The data already tells us which approach works.