Three months after your marketing ops hire started, you're still manually pulling reports.

The attribution model rebuild you promised the board in Q2? Still on the roadmap.

The automated lead routing that was supposed to free up 15 hours per week? Someone is still doing it in spreadsheets.

The AI-driven campaign workflows that would let you scale without adding headcount? They exist as a deck in someone's Google Drive.

Your hire isn't incompetent. They show up, and they use AI tools daily. They have a well-tuned prompt that gets ChatGPT to analyze performance data, generate email variants, and summarize campaign results.

But they can't architect the systems that would actually scale your marketing operations.

The average ramp time for SaaS roles is 5.7 months in 2025, up 32% from 4.3 months in 2020 ¹. Month seven arrives. The gap between what you hired them to do and what they can actually deliver hasn't closed. It won't.

The AI project failure rate is 80%, twice the rate of traditional IT projects ². Only 48% of AI projects make it into production, taking an average of eight months from prototype to deployment ³.

Most organizations calculate the visible costs. Salary. Recruiting fees. Benefits. The US Department of Labor estimates a bad hire costs at least 30% of first-year wages ⁴. For a $120,000 marketing ops hire, that's at least $36,000. Counting salary, benefits, and recruiting fees, the visible total comes to roughly $140,000.

That's the tip of the iceberg.

Below the waterline: competitive ground lost while you ramped someone who can't deliver, team productivity drained as peers cover gaps, strategic paralysis as initiatives stall, and procurement decisions that filtered out the people who could actually solve your problems.

The real cost isn't what you paid. It's what you didn't gain.

The Iceberg: What You're Not Measuring

Five categories of hidden costs that most organizations never measure.

The Capability Debt

The total cost to ramp a new hire is estimated at three times their base salary ⁵. For a $120,000 marketing ops specialist, that's $360,000 before they reach full productivity.

If your hire can't actually architect AI systems, that ramp never completes. They plateau at 40% of what you need. Month seven arrives. They're still manually pulling reports. Still using spreadsheets for processes that should be automated. Still attending the same meetings without delivering the systems that would eliminate them.

You're accumulating capability debt that compounds monthly.

The Competitive Separation

Your hiring process took 44 days. Add 5.7 months of ramp time. That's seven months from job posting to productivity.

Your competitor deployed an AI-fluent specialist in two weeks.

By month three, they rebuilt their lead scoring model. Prospects route automatically based on behavioral signals, firmographic fit, and engagement patterns. By month five, they launched an attribution system that tracks every touchpoint from anonymous browse to closed-won. By month seven, they compressed campaign production time by 63%.
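To make the lead scoring example concrete, here is a minimal Python sketch of routing on the three signal families named above (behavioral signals, firmographic fit, engagement patterns). The weights, thresholds, queue names, and the `Lead` structure are all hypothetical, chosen only for illustration. A production model would be trained and validated on real conversion data rather than hand-weighted.

```python
from dataclasses import dataclass

# Hypothetical weights and thresholds, for illustration only.
WEIGHTS = {"behavioral": 0.40, "firmographic": 0.35, "engagement": 0.25}
SALES_THRESHOLD = 0.70
NURTURE_THRESHOLD = 0.40


@dataclass
class Lead:
    name: str
    behavioral: float    # e.g. pricing-page visits, normalized to 0..1
    firmographic: float  # e.g. company size and industry fit, 0..1
    engagement: float    # e.g. email and webinar activity, 0..1


def score(lead: Lead) -> float:
    """Weighted sum of the three signal families."""
    return (
        WEIGHTS["behavioral"] * lead.behavioral
        + WEIGHTS["firmographic"] * lead.firmographic
        + WEIGHTS["engagement"] * lead.engagement
    )


def route(lead: Lead) -> str:
    """Map a score to a (hypothetical) destination queue."""
    s = score(lead)
    if s >= SALES_THRESHOLD:
        return "sales"
    if s >= NURTURE_THRESHOLD:
        return "nurture"
    return "low_priority"


if __name__ == "__main__":
    for lead in (
        Lead("Acme", 0.9, 0.9, 0.9),
        Lead("Globex", 0.5, 0.5, 0.5),
        Lead("Initech", 0.1, 0.1, 0.1),
    ):
        print(lead.name, round(score(lead), 2), route(lead))
```

The point of the sketch is the shape of the system, not the numbers: scoring and routing are explicit, testable rules instead of a judgment call made in a spreadsheet.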

Your hire is still ramping in the role you posted in month one.

Only 5% of AI pilot programs achieve rapid revenue acceleration ⁶. Your competitor is in that 5%. They deployed real capability while others remained stuck in hiring paralysis.

The separation isn't seven months. It's the multiplicative advantage they gained from building, learning, and iterating while you waited. They captured market positioning you're trying to win back. They established customer relationships that created switching costs. They built data infrastructure that informs every decision they make.

The Organizational Drag

A bad hire doesn't just fail to contribute. They reduce the output of everyone around them.

Managers spend 17% of their time supervising a bad hire ⁷. For a marketing ops manager earning $150,000 annually, that's $25,500 per year diverted from strategic work to managing someone who can't deliver.

Your demand gen specialist was supposed to collaborate with the new hire on automated reporting. Instead, they're manually pulling data every Monday. That's four hours weekly. Your campaign manager designed workflows assuming AI-driven personalization would be ready by Q3. It's Q4. They're still manually segmenting audiences.

The attribution model rebuild gets pushed to next year. The content production system that would have tripled team output remains conceptual. The predictive lead scoring you promised the board? Still on the roadmap.

Team members who can deliver start questioning whether they're in the right place. The best people leave. You're back in hiring mode, except now you're replacing productive team members, not just the failed hire.

The Credibility Erosion

When you hire someone who can't build systems, you inherit failure patterns. Pilots that stall. Proof-of-concepts that never scale. Vendor demos that deliver nothing because your team can't integrate them.

Meanwhile, your CEO sees competitors deploying AI-powered campaigns. Your CFO questions why you're spending $85,521 per month on AI infrastructure (the 2025 enterprise average, up 36% from 2024) ⁸ with no measurable return.

Digital transformation failures cost organizations an estimated $2.3 trillion annually ⁹. These aren't technology failures. They're capability failures.

When marketing ops can't deliver on AI promises, marketing loses budget, loses headcount, loses the strategic positioning that justifies investment. You become a cost center, not a growth driver.

The Procurement Trap

You finally identify someone who can architect AI systems. They quote $425 per hour for a 10-week engagement to build three automated workflows that will eliminate 22 hours of manual work weekly.

Procurement counters at $340 per hour.

The specialist declines. In their experience, organizations that lead with rate negotiation measure the wrong outcomes: a focus on cost-per-hour creates environments where success gets defined by hours billed rather than problems solved.

Procurement considers this a win. They'll find someone at the target rate.

Two months later, you engage a $245-per-hour contractor. Five months and $196,000 later, you have systems that work in controlled conditions but break under load. The lead scoring model produces inconsistent results. The reporting automation requires manual intervention at two points. Your team can't maintain what was built.

You spend another three months and $88,200 trying to salvage it. Then you start over.

Total cost: $284,200 in contractor fees, eight months of competitive disadvantage, and you're back where you started.
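The arithmetic behind that total can be checked in a few lines of Python. This sketch uses only the figures quoted in the scenario above. The implied hour counts are derived by dividing the fees by the $245 rate; the text does not state them directly.

```python
# Figures from the procurement scenario above.
RATE_PER_HOUR = 245        # dollars, the contractor procurement engaged
FIRST_ATTEMPT_FEES = 196_000
SALVAGE_FEES = 88_200
FIRST_ATTEMPT_MONTHS = 5
SALVAGE_MONTHS = 3

total_fees = FIRST_ATTEMPT_FEES + SALVAGE_FEES
total_months = FIRST_ATTEMPT_MONTHS + SALVAGE_MONTHS

# Implied billed hours (derived here, not stated in the text).
first_attempt_hours = FIRST_ATTEMPT_FEES // RATE_PER_HOUR
salvage_hours = SALVAGE_FEES // RATE_PER_HOUR

print(f"Total contractor fees: ${total_fees:,}")      # $284,200
print(f"Months elapsed: {total_months}")              # 8
print(f"Implied hours billed: {first_attempt_hours + salvage_hours}")  # 1160
```

At $245 per hour, the "cheaper" engagement implies 1,160 billed hours with no working system at the end of them.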

Informatica's CDO Insights 2025 survey identifies shortage of skills as a top obstacle to AI success (35%), with data quality and technical maturity tied at 43% each ¹⁰. When procurement optimizes for cost-per-hour rather than capability-per-outcome, they systematically filter out people who could solve the technical maturity problem.

Your competitor didn't negotiate on hourly rate. They contracted for results. Their specialist deployed working systems in seven weeks. Your team is troubleshooting failures in month eight.

Why Your Interview Process Can't Detect This

Both candidates say identical things. "Experience with AI tools for marketing automation." "Implemented AI-driven workflows to improve efficiency." They discuss automation confidently. They present as sophisticated about the technology.

The difference only becomes visible when you ask them to build something.

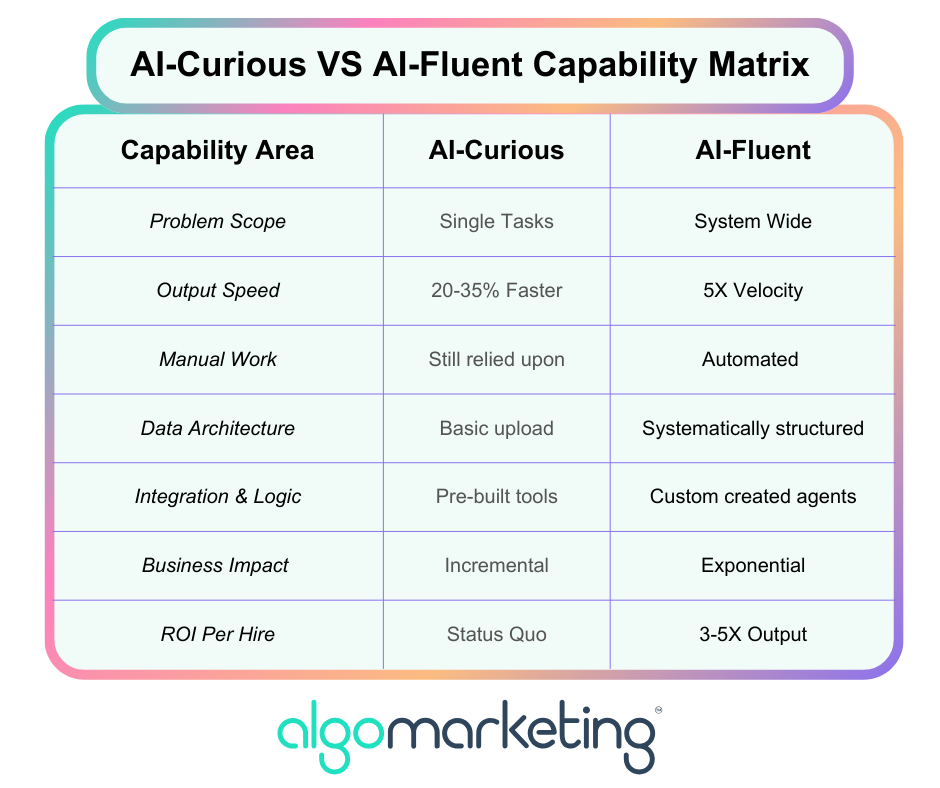

AI-fluent means the ability to architect lead scoring models that route prospects based on predictive fit. To create content systems that generate, test, and optimize variants at scale. To deploy reporting pipelines that surface insights without manual intervention. To architect integrations between AI capabilities and existing martech infrastructure.

These skills require systems thinking, integration logic, workflow automation expertise, and deep understanding of how AI models behave under production load. None of which surfaces in a 45-minute interview.

One candidate has used ChatGPT to draft email copy. The other has built custom GPT instances that auto-pull from your CMS, generate ten variants optimized for different segments, and cut production time by 78%. Both answers sound equally impressive.

Forty percent of enterprises lack adequate AI expertise internally to properly evaluate AI capability ¹¹. Hiring managers can't distinguish between someone who has architected production systems and someone who has used consumer AI tools. The gap becomes apparent in month three, not during interviews.

By then, the visible costs are sunk. The hidden costs are accumulating.

The specialists who can actually architect enterprise AI systems aren't browsing job boards. They're deployed on outcome-based engagements with organizations that figured out how to reach them. They evaluate opportunities differently. Will this organization support what I'm building? Can I deploy capability without bureaucratic friction? Will success be measured by systems that work or hours I logged?

Traditional hiring processes filter them out. Job postings optimized for keyword matching. Recruiting agencies optimized for filling seats. Procurement teams optimized for lowest hourly cost.

The infrastructure can't reach autonomous professionals who choose project-based work strategically.

What's Actually Required

The organizations maintaining competitive advantage aren't doing anything magical. They've simply rebuilt their talent infrastructure to match how elite AI capability actually operates in 2025.

Here's what that requires:

Speed to deployment, not cost per hour. The advantage isn't saving $50 per hour on contractor rates. It's deploying capability in two weeks instead of seven months. Every week of delay is another iteration cycle lost, another set of learnings your competitor captures, another step behind in the compounding advantage race.

Most procurement systems can't measure this. They're built to optimize cost per hour, not speed to market impact. The infrastructure treats all contractors as interchangeable and negotiates rates as if you're buying commodities. It filters out the specialists who could actually solve your problems because it's measuring the wrong variable.

Outcome-based engagements, not time-based employment. Contract for "three automated workflows that reduce manual reporting from 22 hours weekly to 2 hours, with documented processes and knowledge transfer." Not "someone for six months at an hourly rate."

Success gets measured by whether the system works and whether your team can maintain it. Not by whether someone logged their hours correctly or attended enough meetings.

Most organizations don't have contract templates for this. They have employment agreements designed for permanent headcount. They have vendor management systems built for temp workers. They have approval processes that assume all work gets measured in hours, not outcomes.
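One way to picture an outcome-based engagement is as a set of acceptance criteria that can be checked, instead of a timesheet. The Python sketch below encodes the example terms quoted above (three workflows, manual reporting reduced from 22 hours weekly to 2, documented processes, knowledge transfer). The `Deliverable` structure and its field names are hypothetical, not a standard contract format.

```python
from dataclasses import dataclass

# Targets taken from the example contract language above.
REQUIRED_WORKFLOWS = 3
BASELINE_MANUAL_HOURS = 22   # hours per week before the engagement
TARGET_MANUAL_HOURS = 2      # hours per week after the engagement


@dataclass
class Deliverable:
    """Hypothetical record of what was measured at the end of the engagement."""
    workflows_in_production: int
    manual_hours_per_week: float
    processes_documented: bool
    knowledge_transferred: bool


def unmet_criteria(d: Deliverable) -> list[str]:
    """Return the acceptance criteria that are not yet satisfied."""
    unmet = []
    if d.workflows_in_production < REQUIRED_WORKFLOWS:
        unmet.append("three automated workflows in production")
    if d.manual_hours_per_week > TARGET_MANUAL_HOURS:
        unmet.append("manual reporting at or below 2 hours weekly")
    if not d.processes_documented:
        unmet.append("documented processes")
    if not d.knowledge_transferred:
        unmet.append("knowledge transfer to the internal team")
    return unmet


def weekly_hours_saved(d: Deliverable) -> float:
    """Hours of manual work eliminated per week against the baseline."""
    return BASELINE_MANUAL_HOURS - d.manual_hours_per_week


if __name__ == "__main__":
    result = Deliverable(3, 2, True, False)
    print(unmet_criteria(result))      # knowledge transfer still open
    print(weekly_hours_saved(result))  # 20
```

Nothing in this check refers to hours billed. The engagement is complete when the list of unmet criteria is empty.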

Deployment infrastructure, not hiring pipelines. The specialists who can architect AI systems at scale aren't filling out job applications. They're already deployed on outcome-based engagements. They choose projects based on whether the organization can actually use what gets built. Whether they'll have the authority to architect properly. Whether success will be measured by systems that work or compliance theater.

Your hiring pipeline can't reach them. It's optimized for candidates seeking traditional employment. Job postings that screen for keywords. Interviews that can't distinguish between ChatGPT users and systems architects. Procurement teams that negotiate hourly rates before understanding business impact.

Change management infrastructure, not just technical deployment. Technology is 30% of the challenge. Organizational behavior change is 70%. Seventy percent of digital transformation initiatives fail to meet their objectives ¹². The technology works in demos. Failure happens in adoption.

Building the system is the easy part. Getting your team to actually use it instead of reverting to manual workflows is where most initiatives die. This requires specialists who understand workflow redesign, stakeholder management, training deployment, and the organizational antibodies that kill new processes.

Most organizations don't have this. They have change management consultants who deliver PowerPoint decks. They don't have operators who embed with teams, build systems, transfer knowledge, and ensure adoption before rolling off.

The infrastructure gap is why 95% of AI pilots fail. Not because the technology doesn't work. Because organizations can't access the people who know how to deploy it, can't structure engagements that attract elite specialists, can't measure success in ways that matter, and can't ensure adoption after systems get built.

Purchasing AI tools from specialized vendors and building partnerships succeed about 67% of the time, while internal builds succeed only one-third as often ¹³. The difference isn't the technology. It's whether you have infrastructure to deploy it.

Your competitors aren't waiting for your hiring process to improve. They've already figured out how to access capability your systems can't reach. While you're optimizing interview questions, they're deploying specialists who architect working systems in seven weeks and compress campaign production time by 63%.

The gap compounds quarterly.

Below the Waterline

What you see when a marketing ops hire fails is $140,000 in salary, benefits, and recruiting costs.

What you don't see happens below the waterline. Seven months of competitive disadvantage while your competitor built data infrastructure and captured market position. Team productivity drain as peers covered gaps and managers redirected 17% of their time to supervision. Strategic paralysis as AI initiatives stalled and your board questioned capabilities. Procurement decisions that systematically filtered out people who could have solved your problems.

For executives, the question isn't whether you can optimize your hiring process. The question is whether your organization has the infrastructure to access capability that determines competitive position.

For AI-fluent specialists, the organizations worth your time already understand this. They measure outcomes rather than activity. They've designed data infrastructure, integration requirements, and change management before engaging you. They treat deployment as partnership, not vendor management.

The iceberg reveals why "AI-proficient" hires can't scale your marketing ops. The visible costs are a rounding error. The hidden costs determine whether you're leading your market or watching competitors scale past you.

Your hiring process was built for a different era. The capability you need to scale marketing ops in 2026 operates under different rules.

The organizations that figure out how to access it will compound their advantage quarter over quarter. The ones that don't will keep hiring "AI-proficient" candidates who can't deliver, watching competitors move faster, and wondering why their AI infrastructure spend produces no measurable return.

In 2026, that's not a hiring problem. It's an infrastructure problem.

Endnotes

1. https://salesso.com/blog/sales-ramp-up-statistics-2025-benchmarks-best-practices/

2. https://blog.quest.com/the-hidden-ai-tax-why-theres-an-80-ai-project-failure-rate/

4. https://distantjob.com/blog/bad-hire-cost/

5. https://salesso.com/blog/sales-ramp-up-statistics-2025-benchmarks-best-practices/

6. https://fortune.com/2025/08/18/mit-report-95-percent-generative-ai-pilots-at-companies-failing-cfo/

7. https://distantjob.com/blog/bad-hire-cost/

8. https://www.artificialintelligence-news.com/news/ai-chip-shortage-enterprise-ctos-2025/

9. https://blog.meltingspot.io/why-digital-transformation-projects-fail/

11. https://www.stack-ai.com/blog/the-biggest-ai-adoption-challenges

12. https://blog.meltingspot.io/why-digital-transformation-projects-fail/

13. https://fortune.com/2025/08/18/mit-report-95-percent-generative-ai-pilots-at-companies-failing-cfo/